Appendix: Maximum Likelihood Estimation Example

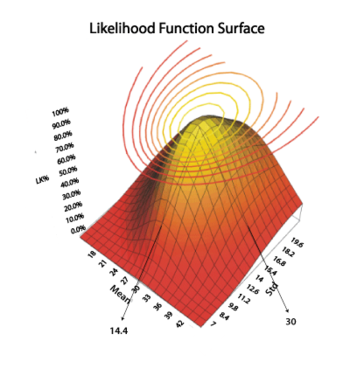


Latest revision as of 19:13, 15 September 2023

MLE Statistical Background

If [math]\displaystyle{ x\,\! }[/math] is a continuous random variable with pdf:

- [math]\displaystyle{ \begin{align} f(x;{{\theta }_{1}},{{\theta }_{2}},...,{{\theta }_{k}}), \end{align}\,\! }[/math]

where [math]\displaystyle{ {{\theta }_{1}},{{\theta }_{2}},...,{{\theta }_{k}}\,\! }[/math] are the [math]\displaystyle{ k\,\! }[/math] unknown constant parameters to be estimated, conduct an experiment and obtain [math]\displaystyle{ N\,\! }[/math] independent observations, [math]\displaystyle{ {{x}_{1}},{{x}_{2}},...,{{x}_{N}}\,\! }[/math], which in life data analysis correspond to failure times. The likelihood function (for complete data) is given by:

- [math]\displaystyle{ L({{x}_{1}},{{x}_{2}},...,{{x}_{N}}|{{\theta }_{1}},{{\theta }_{2}},...,{{\theta }_{k}})=L=\underset{i=1}{\overset{N}{\mathop \prod }}\,f({{x}_{i}};{{\theta }_{1}},{{\theta }_{2}},...,{{\theta }_{k}})\,\! }[/math]

- [math]\displaystyle{ \begin{align} i=1,2,...,N \end{align}\,\! }[/math]

The logarithmic likelihood function is:

- [math]\displaystyle{ \Lambda =\ln L=\underset{i=1}{\overset{N}{\mathop \sum }}\,\ln f({{x}_{i}};{{\theta }_{1}},{{\theta }_{2}},...,{{\theta }_{k}})\,\! }[/math]

The maximum likelihood estimators (MLE) of [math]\displaystyle{ {{\theta }_{1}},{{\theta }_{2}},...,{{\theta }_{k}}\,\! }[/math] are obtained by maximizing [math]\displaystyle{ L\,\! }[/math] or, equivalently, [math]\displaystyle{ \Lambda .\,\! }[/math] Because [math]\displaystyle{ \Lambda \,\! }[/math] is much easier to work with than [math]\displaystyle{ L\,\! }[/math], the estimators are found as the simultaneous solutions of the [math]\displaystyle{ k\,\! }[/math] equations:

- [math]\displaystyle{ \frac{\partial (\Lambda )}{\partial {{\theta }_{j}}}=0,j=1,2,...,k\,\! }[/math]
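As a rough illustration of why [math]\displaystyle{ \Lambda \,\! }[/math] is usually the more practical quantity, the sketch below evaluates both the product form and the sum form for an exponential pdf. The choice of pdf, the function names, the data values and the parameter value are all assumptions made for this illustration and do not come from the text.

```python
import math

def exponential_pdf(x, lam):
    """pdf f(x; lam) = lam * exp(-lam * x), used here only as an example."""
    return lam * math.exp(-lam * x)

def likelihood(data, lam):
    """L: product of the pdf over all observations."""
    return math.prod(exponential_pdf(x, lam) for x in data)

def log_likelihood(data, lam):
    """Lambda: sum of ln f over all observations."""
    return sum(math.log(exponential_pdf(x, lam)) for x in data)

# Hypothetical failure times (hours) and a trial parameter value.
data = [12.0, 25.0, 31.0, 47.0, 60.0]
lam = 0.03

L = likelihood(data, lam)
Lam = log_likelihood(data, lam)
print(L, Lam, math.log(L))  # ln(L) and Lambda agree for a small sample

# For a large sample the product underflows in floating point,
# while the sum of logarithms remains a usable finite number.
big = data * 400
print(likelihood(big, lam), log_likelihood(big, lam))
```

Since the logarithm is monotonically increasing, the parameter values that maximize the sum also maximize the product, so nothing is lost by working with the sum.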

Even though it is common practice to plot the MLE solutions using median ranks (points are plotted according to median ranks and the line according to the MLE solutions), this is not completely accurate. As can be seen from the equations above, the MLE method does not depend on ranks of any kind. For this reason, the MLE solution often appears not to track the data on the probability plot. This is perfectly acceptable, since the two methods are independent of each other, and it in no way suggests that the solution is wrong.

Illustrating MLE Using the Exponential Distribution

- To obtain the estimate [math]\displaystyle{ \widehat{\lambda }\,\! }[/math] for a sample of [math]\displaystyle{ n\,\! }[/math] units (all tested to failure), first write the likelihood function:

- [math]\displaystyle{ \begin{align} L(\lambda |{{t}_{1}},{{t}_{2}},...,{{t}_{n}})= & \underset{i=1}{\overset{n}{\mathop \prod }}\,f({{t}_{i}}) \\ = & \underset{i=1}{\overset{n}{\mathop \prod }}\,\lambda {{e}^{-\lambda {{t}_{i}}}} \\ = & {{\lambda }^{n}}\cdot {{e}^{-\lambda \underset{i=1}{\overset{n}{\mathop{\sum }}}\,{{t}_{i}}}} \end{align}\,\! }[/math]

- Take the natural log of both sides:

- [math]\displaystyle{ \Lambda =\ln (L)=n\ln (\lambda )-\lambda \underset{i=1}{\overset{n}{\mathop \sum }}\,{{t}_{i}}.\,\! }[/math]

- Obtain [math]\displaystyle{ \tfrac{\partial \Lambda }{\partial \lambda }\,\! }[/math], and set it equal to zero:

- [math]\displaystyle{ \frac{\partial \Lambda }{\partial \lambda }=\frac{n}{\lambda }-\underset{i=1}{\overset{n}{\mathop \sum }}\,{{t}_{i}}=0\,\! }[/math]

- Solve for [math]\displaystyle{ \widehat{\lambda }\,\! }[/math]:

- [math]\displaystyle{ \hat{\lambda }=\frac{n}{\underset{i=1}{\overset{n}{\mathop{\sum }}}\,{{t}_{i}}}\,\! }[/math]
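The steps above can be checked numerically with a minimal sketch like the following. The function names and the failure times are hypothetical and serve only to illustrate the closed-form result for complete data.

```python
def exponential_mle(times):
    """Closed-form MLE of lambda for complete data: n / sum(t_i)."""
    return len(times) / sum(times)

def score(times, lam):
    """First derivative of Lambda with respect to lambda: n / lam - sum(t_i)."""
    return len(times) / lam - sum(times)

def curvature(times, lam):
    """Second derivative of Lambda with respect to lambda: -n / lam**2."""
    return -len(times) / lam ** 2

# Hypothetical failure times in hours, all units tested to failure.
times = [15.0, 22.0, 38.0, 41.0, 64.0]

lam_hat = exponential_mle(times)
print(lam_hat)                    # estimated failure rate per hour
print(score(times, lam_hat))      # essentially zero at the estimate
print(curvature(times, lam_hat))  # negative, so the stationary point is a maximum
```

The second derivative is negative for every positive value of the parameter, which is why setting the first derivative to zero is enough to locate the maximum in this case.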

Notes About [math]\displaystyle{ \widehat{\lambda }\,\! }[/math]

Note that the value of [math]\displaystyle{ \widehat{\lambda }\,\! }[/math] is only an estimate: if we obtained another sample from the same population and re-estimated [math]\displaystyle{ \lambda \,\! }[/math], the new value would differ from the one previously calculated. In plain language, [math]\displaystyle{ \hat{\lambda }\,\! }[/math] is an estimate of the true value of ... How close is the value of our estimate to the true value? To answer this question, one must first determine the distribution of the parameter estimate, in this case [math]\displaystyle{ \hat{\lambda }\,\! }[/math]. This methodology introduces a new term, confidence bound, which allows us to specify a range for our estimate with a certain confidence level. The treatment of confidence bounds is integral to reliability engineering, and to all of statistics. (Confidence bounds are covered in Confidence Bounds.)
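The sample-to-sample variation described here can be seen in a small simulation. This is only a sketch: the "true" failure rate, the sample size, the number of repetitions and the seed are arbitrary assumptions, not values from the text.

```python
import random

def exponential_mle(times):
    """Closed-form MLE of lambda for complete data: n / sum(t_i)."""
    return len(times) / sum(times)

rng = random.Random(0)  # fixed seed so that the run is repeatable
true_lam = 0.02         # assumed true failure rate (hypothetical)
n = 20                  # units per sample (hypothetical)

# Draw several independent samples from the same population
# and re-estimate lambda from each one.
estimates = []
for _ in range(5):
    sample = [rng.expovariate(true_lam) for _ in range(n)]
    estimates.append(exponential_mle(sample))

for est in estimates:
    print(round(est, 5))
```

Each run of the loop produces a different estimate even though the population is the same, and the spread of those estimates is what a confidence bound is meant to quantify.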

Illustrating MLE Using the Normal Distribution

To obtain the maximum likelihood estimates of the mean, [math]\displaystyle{ \bar{T},\,\! }[/math] and standard deviation, [math]\displaystyle{ {{\sigma }_{T}},\,\! }[/math] of the normal distribution, start with its pdf, which is given by:

- [math]\displaystyle{ f(T)=\frac{1}{{{\sigma }_{T}}\sqrt{2\pi }}{{e}^{-\tfrac{1}{2}{{\left( \tfrac{T-\bar{T}}{{{\sigma }_{T}}} \right)}^{2}}}}\,\! }[/math]

If [math]\displaystyle{ {{T}_{1}},{{T}_{2}},...,{{T}_{N}}\,\! }[/math] are known times-to-failure (and with no suspensions), then the likelihood function is given by:

- [math]\displaystyle{ L({{T}_{1}},{{T}_{2}},...,{{T}_{N}}|\bar{T},{{\sigma }_{T}})=L=\underset{i=1}{\overset{N}{\mathop \prod }}\,\left[ \frac{1}{{{\sigma }_{T}}\sqrt{2\pi }}{{e}^{-\tfrac{1}{2}{{\left( \tfrac{{{T}_{i}}-\bar{T}}{{{\sigma }_{T}}} \right)}^{2}}}} \right]\,\! }[/math]

- [math]\displaystyle{ L=\frac{1}{{{({{\sigma }_{T}}\sqrt{2\pi })}^{N}}}{{e}^{-\tfrac{1}{2}\underset{i=1}{\overset{N}{\mathop{\sum }}}\,{{\left( \tfrac{{{T}_{i}}-\bar{T}}{{{\sigma }_{T}}} \right)}^{2}}}}\,\! }[/math]

then:

- [math]\displaystyle{ \Lambda =\ln L=-\frac{N}{2}\ln (2\pi )-N\ln {{\sigma }_{T}}-\frac{1}{2}\underset{i=1}{\overset{N}{\mathop \sum }}\,{{\left( \frac{{{T}_{i}}-\bar{T}}{{{\sigma }_{T}}} \right)}^{2}}\,\! }[/math]

Then taking the partial derivatives of [math]\displaystyle{ \Lambda \,\! }[/math] with respect to each one of the parameters and setting them equal to zero yields:

- [math]\displaystyle{ \frac{\partial (\Lambda )}{\partial \bar{T}}=\frac{1}{\sigma _{T}^{2}}\underset{i=1}{\overset{N}{\mathop \sum }}\,({{T}_{i}}-\bar{T})=0\,\! }[/math]

and:

- [math]\displaystyle{ \frac{\partial (\Lambda )}{\partial {{\sigma }_{T}}}=-\frac{N}{{{\sigma }_{T}}}+\frac{1}{\sigma _{T}^{3}}\underset{i=1}{\overset{N}{\mathop \sum }}\,{{({{T}_{i}}-\bar{T})}^{2}}=0\,\! }[/math]

Solving the above two derivative equations simultaneously yields:

- [math]\displaystyle{ \bar{T}=\frac{1}{N}\underset{i=1}{\overset{N}{\mathop \sum }}\,{{T}_{i}}\,\! }[/math]

and:

- [math]\displaystyle{ \begin{align} & \hat{\sigma }_{T}^{2}= & \frac{1}{N}\underset{i=1}{\overset{N}{\mathop \sum }}\,{{({{T}_{i}}-\bar{T})}^{2}} \\ & & \\ & {{{\hat{\sigma }}}_{T}}= & \sqrt{\frac{1}{N}\underset{i=1}{\overset{N}{\mathop \sum }}\,{{({{T}_{i}}-\bar{T})}^{2}}} \end{align}\,\! }[/math]
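For readers who want the intermediate algebra, the two derivative equations can be solved as sketched below, using only the symbols already defined. The first line relies on the factor [math]\displaystyle{ 1/\sigma _{T}^{2}\,\! }[/math] being nonzero, and the second follows from multiplying through by [math]\displaystyle{ \sigma _{T}^{3}\,\! }[/math].

```latex
\begin{align}
\sum_{i=1}^{N}(T_i-\bar{T})=0
  \;&\Rightarrow\; \sum_{i=1}^{N}T_i=N\bar{T}
  \;\Rightarrow\; \bar{T}=\frac{1}{N}\sum_{i=1}^{N}T_i \\
-\frac{N}{\sigma_T}+\frac{1}{\sigma_T^{3}}\sum_{i=1}^{N}(T_i-\bar{T})^{2}=0
  \;&\Rightarrow\; N\sigma_T^{2}=\sum_{i=1}^{N}(T_i-\bar{T})^{2}
  \;\Rightarrow\; \hat{\sigma}_T^{2}=\frac{1}{N}\sum_{i=1}^{N}(T_i-\bar{T})^{2}
\end{align}
```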

It should be noted that these solutions are valid only for data with no suspensions (i.e., all units are tested to failure). When suspensions are present, that is, when not all units are tested to failure, the methodology changes and the problem becomes much more complicated.

Illustrating with an Example of the Normal Distribution

If we had five units that failed at 10, 20, 30, 40 and 50 hours, the mean would be:

- [math]\displaystyle{ \begin{align} \bar{T}= & \frac{1}{N}\underset{i=1}{\overset{N}{\mathop \sum }}\,{{T}_{i}} \\ = & \frac{10+20+30+40+50}{5} \\ = & 30 \end{align}\,\! }[/math]

The standard deviation estimate then would be:

- [math]\displaystyle{ \begin{align} {{{\hat{\sigma }}}_{T}}= & \sqrt{\frac{1}{N}\underset{i=1}{\overset{N}{\mathop \sum }}\,{{({{T}_{i}}-\bar{T})}^{2}}} \\ = & \sqrt{\frac{{{(10-30)}^{2}}+{{(20-30)}^{2}}+{{(30-30)}^{2}}+{{(40-30)}^{2}}+{{(50-30)}^{2}}}{5}} \\ = & 14.1421 \end{align}\,\! }[/math]
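The same arithmetic can be reproduced with Python's standard library, as in the sketch below. The variable names are illustrative. Note that the MLE divides by [math]\displaystyle{ N\,\! }[/math], which corresponds to the population standard deviation function, not the sample one.

```python
import math
import statistics

times = [10, 20, 30, 40, 50]  # failure times from the example, in hours

mean_hat = statistics.fmean(times)

# pstdev divides by N (not N - 1), which matches the MLE formula.
sigma_hat = statistics.pstdev(times)

# The same quantity written out directly from the formula.
n = len(times)
sigma_manual = math.sqrt(sum((t - mean_hat) ** 2 for t in times) / n)

print(mean_hat, round(sigma_hat, 4), round(sigma_manual, 4))

# For comparison: stdev divides by N - 1 and therefore gives a larger value.
print(round(statistics.stdev(times), 4))
```

The difference between the two divisors shrinks as the number of failures grows, but for a sample of five it is clearly visible.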

A look at the likelihood function surface plot in the figure reveals that the likelihood function attains its maximum at these two values.

This three-dimensional plot represents the likelihood function. As can be seen from the plot, the maximum likelihood estimates for the two parameters correspond with the peak or maximum of the likelihood function surface.
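A coarse numerical check of the same idea is sketched below: the log-likelihood of the five failure times is evaluated on a grid of candidate parameter values and the highest point is reported. The grid ranges and step sizes are arbitrary assumptions, so the grid maximum only approximates the exact estimates to within the grid spacing.

```python
import math

times = [10, 20, 30, 40, 50]  # failure times from the example, in hours

def normal_log_likelihood(mean, sigma, data):
    """Lambda for the normal distribution with complete data."""
    n = len(data)
    return (-0.5 * n * math.log(2 * math.pi)
            - n * math.log(sigma)
            - 0.5 * sum(((t - mean) / sigma) ** 2 for t in data))

# Candidate values; ranges and steps are illustrative choices only.
means = [20 + 0.5 * i for i in range(41)]    # 20.0 to 40.0
sigmas = [5 + 0.25 * j for j in range(81)]   # 5.0 to 25.0

# Evaluate the surface on the grid and keep the highest point.
best = max((normal_log_likelihood(m, s, times), m, s)
           for m in means for s in sigmas)

best_value, best_mean, best_sigma = best
print(best_mean, best_sigma, best_value)
```

The grid point with the largest log-likelihood sits at the mean of 30 and at the grid value of the standard deviation closest to the peak near 14.14, which is consistent with the surface plot.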